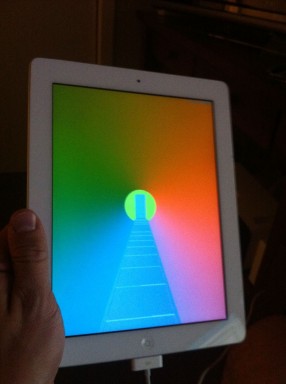

The iPad is one of the major platforms The Witness will appear on. Andy has been working on the iPad port and it is coming along. You can't actually play the game yet, but we are probably close to that. The gameplay doesn't know how to respond to a touch interface yet, though. It is important to me that this interface is very good, so I wanted to start designing for it now, to give me time to wrestle with difficult issues. Since the game already runs on Windows, it seemed natural to start designing the touch interface on Windows.

I am not sure that many people are going to use Windows for touch devices, at least in the Windows 8 timeframe. But at least some people will, so it'd be nice to have a high-quality touch interface built into the game on Windows.

With all this in mind, I started working on some Windows 8 touch hardware a couple of days ago. There is a message format that you can use in Windows 8 to get events from the touch hardware, WM_POINTER, but because I want the touch interface to work in Windows 7 as well, I wanted to use WM_TOUCH messages, which were introduced in Windows 7 and are supported in both operating systems.

It took me less than 20 minutes to get WM_POINTER working. It took many, many hours to get WM_TOUCH working, because WM_TOUCH is some kind of fully insane mess. None of the insanity seems to be documented, or discussed on the internet, so I am laying it out here in case anyone in the future stumbles into this same trap.

A WM_TOUCH message will never be returned by PeekMessage. Spy++ will tell you that WM_TOUCH is being sent to your application, but before the message is delivered, it gets stealthily converted into a mouse motion event. The type of this event will vary depending on whether you have raw input selected: if raw input, it will be WM_INPUT (0x00ff); if not, it will be WM_MOUSEMOVE (0x0200).

If your application handles these events, you may think it's reasonable to complete the handling without calling DefWindowProc. If you do this, you will never see a WM_TOUCH, because something buried down in DefWindowProc stealthily dispatches a WM_TOUCH to your application's windows when it sees a specially-marked WM_INPUT or WM_MOUSEMOVE.

Note that you are actually supposed to always call DefWindowProc on WM_INPUT (for "cleanup"; I don't know what this means or why they can't just clean it up when you get the next message, but whatever). WM_MOUSEMOVE has no such requirement, and if you believe you handled the event, it is natural *not* to call DefWindowProc. This will result in your never seeing WM_TOUCH. Strictly speaking our game had a bug, in that we were not calling DefWindowProc after WM_INPUT. But the bug was hard to find: it persisted when I tried turning off raw input, because we also were not calling DefWindowProc after WM_MOUSEMOVE (which, as I mentioned, is in theory totally fine).

Important to note: WM_TOUCH gets dispatched to your windows without you dispatching it! You may think that you can log all messages after they come out of PeekMessage, and that you are in control of your entire message stream, but this is apparently not true. WM_TOUCH will just teleport itself into your window.
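To make the DefWindowProc requirement concrete, here is a minimal sketch of a window procedure that handles mouse motion itself but still lets WM_INPUT and WM_MOUSEMOVE fall through, so that WM_TOUCH can arrive. It is not the game's actual code: the `game_on_*` hooks, `must_reach_defwindowproc`, and `MAX_TOUCH_POINTS` are hypothetical names. The sketch also assumes the window opts in to touch with `RegisterTouchWindow`, which Windows requires before it will send WM_TOUCH at all. The Windows-specific part is guarded so that the forwarding rule can be compiled and checked on its own.

```c
#ifdef _WIN32
#ifndef _WIN32_WINNT
#define _WIN32_WINNT 0x0601   /* Windows 7, the first version with WM_TOUCH */
#endif
#include <windows.h>
#else
/* Message values from winuser.h, so the helper below compiles anywhere. */
#define WM_INPUT     0x00FF
#define WM_MOUSEMOVE 0x0200
#endif

/* Returns 1 for messages that must still reach DefWindowProc even after the
   application has handled them, because WM_TOUCH is dispatched from there. */
int must_reach_defwindowproc(unsigned msg)
{
    return msg == WM_INPUT || msg == WM_MOUSEMOVE;
}

#ifdef _WIN32
#define MAX_TOUCH_POINTS 16   /* assumption: arbitrary cap for this sketch */

/* Hypothetical game hooks; replace with real input handling. */
static void game_on_mouse_move(int x, int y) { (void)x; (void)y; }
static void game_on_touch(DWORD id, int x, int y, DWORD flags)
{ (void)id; (void)x; (void)y; (void)flags; }

LRESULT CALLBACK WndProc(HWND hwnd, UINT msg, WPARAM wParam, LPARAM lParam)
{
    int handled = 0;

    switch (msg) {
    case WM_CREATE:
        RegisterTouchWindow(hwnd, 0);   /* opt in to WM_TOUCH */
        return 0;

    case WM_LBUTTONDOWN:
        /* Fully handled here; skipping DefWindowProc is fine. */
        handled = 1;
        break;

    case WM_MOUSEMOVE:
        game_on_mouse_move((short)LOWORD(lParam), (short)HIWORD(lParam));
        handled = 1;   /* handled, but still forwarded below */
        break;

    case WM_INPUT:
        /* Raw input would be read here with GetRawInputData. */
        handled = 1;   /* handled, but still forwarded below */
        break;

    case WM_TOUCH: {
        TOUCHINPUT points[MAX_TOUCH_POINTS];
        UINT count = LOWORD(wParam);   /* number of touch points */
        UINT i;
        if (count > MAX_TOUCH_POINTS) count = MAX_TOUCH_POINTS;
        if (GetTouchInputInfo((HTOUCHINPUT)lParam, count, points,
                              sizeof(TOUCHINPUT))) {
            for (i = 0; i < count; i++) {
                /* x and y are screen coordinates in hundredths of a pixel. */
                game_on_touch(points[i].dwID,
                              TOUCH_COORD_TO_PIXEL(points[i].x),
                              TOUCH_COORD_TO_PIXEL(points[i].y),
                              points[i].dwFlags);
            }
            CloseTouchInputHandle((HTOUCHINPUT)lParam);
            return 0;
        }
        break;   /* could not read the touch data; let Windows handle it */
    }
    }

    if (handled && !must_reach_defwindowproc(msg)) return 0;
    return DefWindowProc(hwnd, msg, wParam, lParam);
}
#endif
```

The point of the `handled` flag is that "I dealt with this message" and "DefWindowProc may be skipped" are separate questions for the two mouse motion messages.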

In addition to the mouse motion events, your application will get a bunch of other mouse events that are generated by the touch interface, to emulate mouse stuff for programs that don't understand touch. There is no way to tell Windows you don't want these events (unless you do Windows 8 stuff and respond to WM_POINTER; see below). According to Microsoft, the official way you are supposed to detect and ignore these messages is like this:

    #define MOUSEEVENTF_FROMTOUCH 0xFF515700

    if ((GetMessageExtraInfo() & MOUSEEVENTF_FROMTOUCH) == MOUSEEVENTF_FROMTOUCH) {
        // Skip message
    }

I wish I were joking. It's been this way for years; Casey wrote about this in 2008.

This mask works for events like WM_LBUTTONDOWN, but it does not work for the mouse motion events (e.g. WM_INPUT). If you want to take input both from touch and the mouse, you need to disambiguate the real WM_INPUTs from the fake ones. Through a process of experimentation with message flags, I landed on this:

if ((GetMessageExtraInfo() & 0x82) == 0x82) { /* ignore event */ }
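The two checks can be wrapped in small helpers so the magic numbers live in one place. This is only a sketch: the function names and `TOUCH_MOTION_MASK` are made up for illustration, and the 0x82 value is the experimentally found one from above, not something Microsoft documents. The helpers take the extra-info value as a parameter, so the caller is assumed to pass in the result of `GetMessageExtraInfo()`.

```c
#include <stdint.h>

/* Signature Microsoft describes for mouse messages synthesized from touch. */
#define MOUSEEVENTF_FROMTOUCH 0xFF515700u

/* Hypothetical name for the experimentally found motion-event bits. */
#define TOUCH_MOTION_MASK 0x82u

/* For button-style messages such as WM_LBUTTONDOWN. */
int is_button_event_from_touch(uintptr_t extra_info)
{
    return (extra_info & MOUSEEVENTF_FROMTOUCH) == MOUSEEVENTF_FROMTOUCH;
}

/* For motion messages such as WM_INPUT, where the mask above does not work.
   Undocumented behavior; it may change in a future version of Windows. */
int is_motion_event_from_touch(uintptr_t extra_info)
{
    return (extra_info & TOUCH_MOTION_MASK) == TOUCH_MOTION_MASK;
}
```

Inside a window procedure, the call would look something like `is_motion_event_from_touch((uintptr_t)GetMessageExtraInfo())`.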

It seems to work for now, but who knows if that will mysteriously break in some future version of Windows.

Nicely, if your application handles WM_POINTER messages, you will not get any of this mouse emulation stuff or WM_TOUCH, so all this ugliness disappears. If it is okay to make Windows 8 your minimum specification, I recommend using WM_POINTER and staying far away from WM_TOUCH.
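For comparison, a minimal sketch of the WM_POINTER route might look like the following. It assumes Windows 8 headers (`_WIN32_WINNT` of 0x0602 or later). The names `pointer_phase`, `handle_pointer_message`, and `game_on_pointer` are hypothetical, and the non-Windows branch exists only so that the message classification can be compiled and checked without the Windows SDK.

```c
#ifdef _WIN32
#ifndef _WIN32_WINNT
#define _WIN32_WINNT 0x0602   /* Windows 8, the first version with WM_POINTER */
#endif
#include <windows.h>
#else
/* Message values from winuser.h, so pointer_phase compiles anywhere. */
#define WM_POINTERUPDATE 0x0245
#define WM_POINTERDOWN   0x0246
#define WM_POINTERUP     0x0247
#endif

enum PointerPhase { PHASE_NONE, PHASE_BEGAN, PHASE_MOVED, PHASE_ENDED };

/* Maps a window message to a contact phase; PHASE_NONE for anything else. */
enum PointerPhase pointer_phase(unsigned msg)
{
    switch (msg) {
    case WM_POINTERDOWN:   return PHASE_BEGAN;
    case WM_POINTERUPDATE: return PHASE_MOVED;
    case WM_POINTERUP:     return PHASE_ENDED;
    default:               return PHASE_NONE;
    }
}

#ifdef _WIN32
/* Hypothetical game hook; replace with real input handling. */
static void game_on_pointer(unsigned id, enum PointerPhase phase, int x, int y)
{ (void)id; (void)phase; (void)x; (void)y; }

/* Returns 1 if the message was a pointer message and was consumed. */
int handle_pointer_message(HWND hwnd, UINT msg, WPARAM wParam)
{
    enum PointerPhase phase = pointer_phase(msg);
    POINTER_INFO info;

    if (phase == PHASE_NONE) return 0;
    if (!GetPointerInfo(GET_POINTERID_WPARAM(wParam), &info)) return 0;

    /* ptPixelLocation is in screen coordinates; convert to client space. */
    ScreenToClient(hwnd, &info.ptPixelLocation);
    game_on_pointer(info.pointerId, phase,
                    info.ptPixelLocation.x, info.ptPixelLocation.y);
    return 1;
}
#endif
```

A window procedure would call `handle_pointer_message` first and return 0 when it reports 1, passing everything else along as usual.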